In this blog, I'll try to explain my understanding of, and intuition for, how filters (also called kernels) work in convolutional neural networks (CNNs).

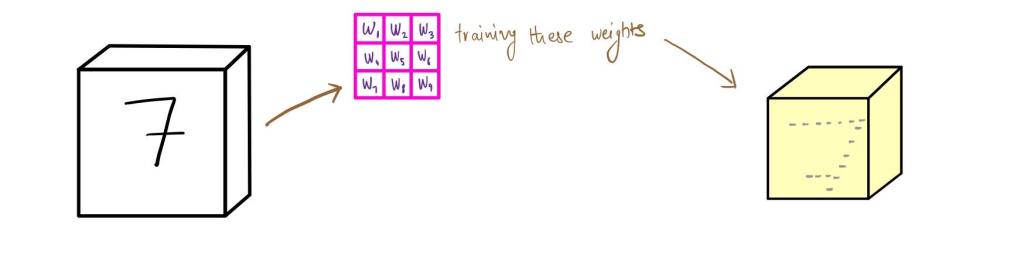

Suppose we take an RGB (3-channel) image of the number 7 and want a CNN to identify which digit it is. We apply a filter (kernel) to the image: a small grid of weights, with one slice per color channel, that slides across the image and produces an output image highlighting one particular feature, and the parts of the image where it appears, while largely ignoring the rest. Depending on its weights, a filter might act as an edge detector, a corner detector, or a detector for some other feature of the image, so the output image we get is specific to that filter and its weights.
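To make this concrete, here is a minimal NumPy sketch of one filter sliding over a tiny RGB image. The 6×6 image, the vertical-edge kernel, and the function name `conv2d_single` are hypothetical choices for illustration. The sketch assumes no padding and a stride of 1 and, like most deep learning libraries, computes cross-correlation (the kernel is not flipped) while still calling it convolution.

```python
import numpy as np

def conv2d_single(image, kernel):
    """Valid (no padding), stride-1 cross-correlation of an (H, W, C) image
    with a (kh, kw, C) kernel. Returns a single (H-kh+1, W-kw+1) output map."""
    H, W, C = image.shape
    kh, kw, kc = kernel.shape
    assert kc == C, "kernel depth must match the number of image channels"
    out = np.zeros((H - kh + 1, W - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            patch = image[i:i + kh, j:j + kw, :]   # the window under the filter
            out[i, j] = np.sum(patch * kernel)     # weighted sum over all 3 channels
    return out

# Hypothetical 6x6 RGB image: dark left half, bright right half (a vertical edge).
image = np.zeros((6, 6, 3))
image[:, 3:, :] = 1.0

# Hypothetical hand-made vertical-edge detector, copied into all 3 channel slices.
edge = np.array([[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]], dtype=float)
kernel = np.stack([edge] * 3, axis=-1)   # shape (3, 3, 3)

fmap = conv2d_single(image, kernel)
print(fmap.shape)  # (4, 4)
print(fmap)
```

Every row of `fmap` comes out as `[0, 9, 9, 0]`: the filter responds only where its window straddles the dark-to-bright boundary and outputs zero over the flat regions on either side.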

As we apply more filters to the image, we get one output image per filter, each highlighting a different feature of the image. When no padding is added around the border, these output images are also smaller than the original image in width and height, because a filter can only be placed where it fits entirely inside the image.
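A sketch of the same idea with several filters, again in NumPy and assuming no padding and a stride of 1. The 28×28 image and the 8 random 5×5 filters are hypothetical stand-ins for trained ones. The helper `output_size` uses the standard formula floor((n + 2p - k) / s) + 1 for input size n, kernel size k, padding p and stride s, which is where the shrinkage comes from.

```python
import numpy as np

def conv2d_multi(image, kernels):
    """image: (H, W, C); kernels: (F, kh, kw, C).
    Valid, stride-1 cross-correlation with F filters -> (F, H-kh+1, W-kw+1)."""
    H, W, C = image.shape
    F, kh, kw, kc = kernels.shape
    assert kc == C, "each filter must be as deep as the image has channels"
    out = np.zeros((F, H - kh + 1, W - kw + 1))
    for f in range(F):                      # one output image per filter
        for i in range(H - kh + 1):
            for j in range(W - kw + 1):
                out[f, i, j] = np.sum(image[i:i + kh, j:j + kw, :] * kernels[f])
    return out

def output_size(n, k, padding=0, stride=1):
    """Spatial output size along one axis: floor((n + 2p - k) / s) + 1."""
    return (n + 2 * padding - k) // stride + 1

rng = np.random.default_rng(0)
image = rng.random((28, 28, 3))               # hypothetical 28x28 RGB image
kernels = rng.standard_normal((8, 5, 5, 3))   # 8 hypothetical 5x5 filters

maps = conv2d_multi(image, kernels)
print(maps.shape)                      # (8, 24, 24)
print(output_size(28, 5))              # 24
print(output_size(28, 5, padding=2))   # 28: padding of 2 keeps the size unchanged
```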

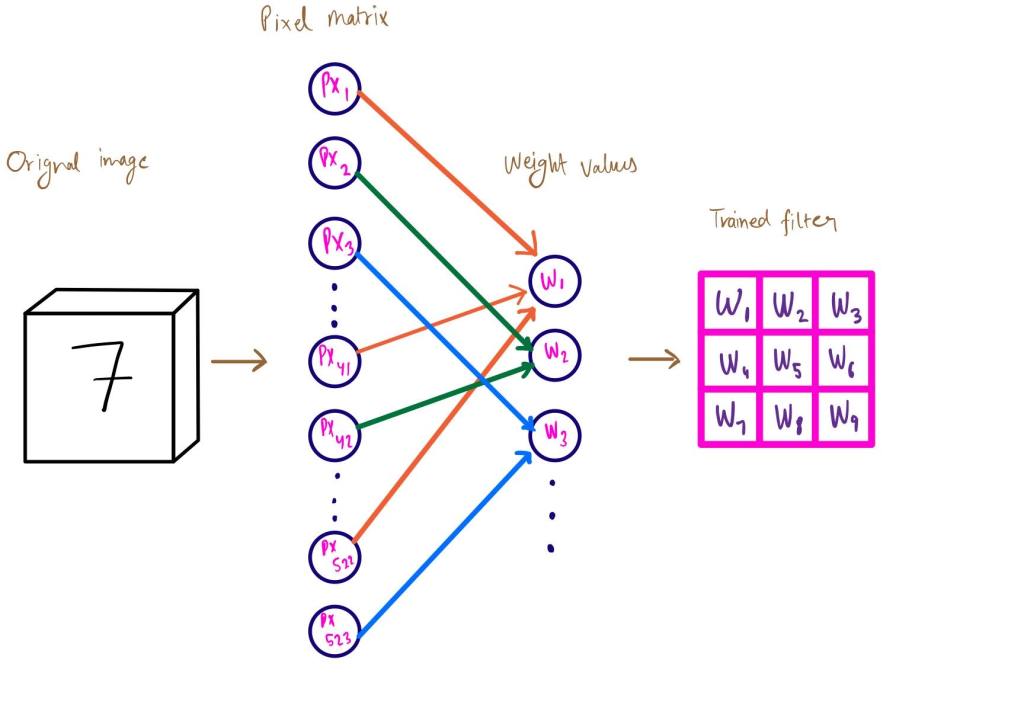

We can view convolving the image as one layer of an ordinary multi-layer neural network. Suppose we flatten all the pixel values of the original image into the input units of the network and treat each pixel of the output image as a hidden unit; the values in the filter are then the weights on the connections between them. The catch is that not every input unit is connected to every hidden unit: each hidden unit depends only on the small patch of input pixels that the filter covers at that position. As we see in the image below, not all input units (pixel values) are connected to every hidden unit.
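The "layer with missing connections" view can be checked directly. The NumPy sketch below uses a hypothetical single-channel 5×5 image and 3×3 filter (to keep the matrix small) and builds the weight matrix of an equivalent fully connected layer: one row per hidden unit (output pixel), one column per input pixel. Most entries are zero, and the nonzero entries in every row are the same nine filter values, so multiplying by this matrix reproduces the convolution output exactly. The function `conv_as_dense_matrix` is a hypothetical helper written for this illustration.

```python
import numpy as np

def conv2d(image, kernel):
    """Valid, stride-1 cross-correlation of a 2-D image with a 2-D kernel."""
    H, W = image.shape
    kh, kw = kernel.shape
    out = np.zeros((H - kh + 1, W - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def conv_as_dense_matrix(kernel, H, W):
    """Weight matrix (num_hidden, num_inputs) of the equivalent dense layer.
    Row r is one hidden unit; it is nonzero only inside its receptive field."""
    kh, kw = kernel.shape
    oh, ow = H - kh + 1, W - kw + 1
    M = np.zeros((oh * ow, H * W))
    for i in range(oh):
        for j in range(ow):
            row = i * ow + j
            for a in range(kh):
                for b in range(kw):
                    # input pixel (i+a, j+b) in row-major flattened order
                    M[row, (i + a) * W + (j + b)] = kernel[a, b]
    return M

rng = np.random.default_rng(1)
image = rng.random((5, 5))            # hypothetical 5x5 grayscale image
kernel = rng.standard_normal((3, 3))  # hypothetical 3x3 filter

M = conv_as_dense_matrix(kernel, 5, 5)
dense_out = (M @ image.ravel()).reshape(3, 3)

print(np.allclose(dense_out, conv2d(image, kernel)))  # True
print(M.shape)                                         # (9, 25)
print(np.count_nonzero(M), "of", M.size, "possible connections are used")
```

Only 81 of the 225 possible connections exist, and those 81 reuse just 9 distinct weights.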

Because of these "missing connections", this layer has far fewer parameters to train than a traditional fully connected layer, where every input unit is connected to every hidden unit. On top of that, the same filter weights are reused at every position in the image, which cuts the number of parameters further. This is why a CNN can be seen as an optimized version of a traditional neural network for images: its structure builds in the intuition that a feature is found by looking at a small neighborhood of pixels, wherever in the image it appears.
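A rough parameter count shows how large the difference is. This sketch assumes a hypothetical 28×28 RGB input, 8 filters of size 5×5, no padding, stride 1, and one bias per hidden unit (or per filter). It compares a fully connected layer with the same number of outputs, a locally connected layer (missing connections but no weight sharing), and a convolutional layer (missing connections plus shared weights).

```python
def dense_params(n_in, n_out):
    """Fully connected: every input unit connects to every hidden unit."""
    return n_in * n_out + n_out              # weights + one bias per hidden unit

def locally_connected_params(n_out, kh, kw, c_in):
    """Sparse connections, but each hidden unit has its own patch weights."""
    return n_out * (kh * kw * c_in + 1)

def conv_params(n_filters, kh, kw, c_in):
    """Sparse connections and shared weights: one kernel plus bias per filter."""
    return n_filters * (kh * kw * c_in + 1)

H, W, C = 28, 28, 3                  # hypothetical RGB input
F, K = 8, 5                          # hypothetical: 8 filters of size 5x5
out_h, out_w = H - K + 1, W - K + 1  # 24 x 24 with no padding, stride 1

n_in = H * W * C                     # 2,352 input units
n_out = F * out_h * out_w            # 4,608 hidden units

dense = dense_params(n_in, n_out)
local = locally_connected_params(n_out, K, K, C)
conv = conv_params(F, K, K, C)

print(f"fully connected:   {dense:,}")   # 10,842,624
print(f"locally connected: {local:,}")   # 350,208
print(f"convolutional:     {conv:,}")    # 608
```

The drop from the first to the second line comes from the missing connections; the drop from the second to the third comes from reusing the same filter weights at every position.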

Another important part of CNNs is what we get out of applying filters to our image. The output images produced by one layer's filters are fed as input to the subsequent layers of the CNN, which break the image down into its features and analyze them, eventually classifying the image.
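Here is a NumPy sketch of how one layer's output images become the next layer's input: the 8 maps from the first layer are stacked like channels, so each second-layer filter must be 8 deep. All sizes and weights are hypothetical, and the ReLU applied after each layer is an assumption (a common choice of non-linearity), not something described in this post.

```python
import numpy as np

def conv_layer(x, kernels):
    """x: (H, W, C); kernels: (F, kh, kw, C). Valid, stride-1.
    Returns (H-kh+1, W-kw+1, F) so it can be fed straight into the next layer."""
    H, W, C = x.shape
    F, kh, kw, kc = kernels.shape
    assert kc == C, "each filter must be as deep as its input has channels"
    out = np.zeros((H - kh + 1, W - kw + 1, F))
    for f in range(F):
        for i in range(out.shape[0]):
            for j in range(out.shape[1]):
                out[i, j, f] = np.sum(x[i:i + kh, j:j + kw, :] * kernels[f])
    return out

rng = np.random.default_rng(2)
image = rng.random((28, 28, 3))              # hypothetical RGB input
layer1 = rng.standard_normal((8, 5, 5, 3))   # 8 filters over 3 color channels
layer2 = rng.standard_normal((16, 3, 3, 8))  # 16 filters over 8 feature maps

h1 = np.maximum(conv_layer(image, layer1), 0)  # ReLU (assumed non-linearity)
h2 = np.maximum(conv_layer(h1, layer2), 0)

print(h1.shape)  # (24, 24, 8)
print(h2.shape)  # (22, 22, 16)
```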

During training, backpropagation updates the weights in these filters, tuning them to detect the features and parts of the image that matter most for classifying it. The video that I have attached below gives a visualization of this process.
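A minimal sketch of what "training the weights in a filter" means, using plain gradient descent on a single 3×3 kernel in NumPy. As a simplifying assumption, the training target is the output of a hypothetical hand-made edge filter (`true_kernel`) on a few random images; in a real CNN the gradient would instead come from the classification loss flowing back through the later layers, and a framework would compute it automatically. The hypothetical helper `kernel_grad` works out the gradient by hand: each filter weight was multiplied with a different input pixel at every output position, so its gradient sums the output gradient times those pixels.

```python
import numpy as np

def conv2d(image, kernel):
    """Valid, stride-1 cross-correlation of a 2-D image with a 2-D kernel."""
    H, W = image.shape
    kh, kw = kernel.shape
    out = np.zeros((H - kh + 1, W - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def kernel_grad(image, grad_out, kh, kw):
    """dLoss/dKernel[a, b] = sum over outputs of grad_out * image pixel it saw."""
    oh, ow = grad_out.shape
    g = np.zeros((kh, kw))
    for a in range(kh):
        for b in range(kw):
            g[a, b] = np.sum(grad_out * image[a:a + oh, b:b + ow])
    return g

rng = np.random.default_rng(0)
images = [rng.random((8, 8)) for _ in range(4)]      # hypothetical training images
true_kernel = np.array([[-1, 0, 1],
                        [-1, 0, 1],
                        [-1, 0, 1]], dtype=float)    # hypothetical target filter
targets = [conv2d(img, true_kernel) for img in images]

kernel = rng.standard_normal((3, 3)) * 0.1  # start from small random weights
lr = 0.2
for step in range(1000):
    grad = np.zeros_like(kernel)
    loss = 0.0
    for img, tgt in zip(images, targets):
        diff = conv2d(img, kernel) - tgt
        loss += np.mean(diff ** 2)                            # mean squared error
        grad += kernel_grad(img, 2 * diff / diff.size, 3, 3)  # backprop into weights
    kernel -= lr * grad / len(images)                         # gradient descent step

print(f"final loss: {loss / len(images):.2e}")
print(np.round(kernel, 3))
```

Starting from small random values, the learned `kernel` ends up very close to `true_kernel`: the filter has been tuned into an edge detector purely by following the gradient.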

As we have seen, filters help us single out parts of the image and train our model to classify the original image.